Section 06 - The Encyclopedia

GPU/VPU

An abbreviation for graphics processing unit or visual processing unit, this term refers to the chip that provides video functionality to the computer as a whole. This chip can sit on a discrete card or be embedded into the platform as an integrated solution. To avoid confusion, the rest of this guide will refer to this chip as the GPU.

Video Card

Technically speaking, this term refers to a physical expansion card that plugs into the motherboard and provides video capabilities to the system. Used more colloquially, the term 'video card' generally refers to the GPU present in the system, whether or not it sits on a discrete expansion card. To avoid confusion, whenever the term videocard is used in this guide, unless otherwise stated, it will refer to a discrete expansion card.

Integrated Graphics/Onboard Video

In order to support a discrete videocard, a computer must have expansion slots. From the manufacturer's perspective, providing these slots has two impacts on the system: [1] it increases the cost of the motherboard and [2] it increases the cost of the system as a whole (since the system will then have to ship with a videocard). To cut these costs, computer manufacturers integrate the video controller directly into the motherboard: the end user still gets a video display, and the manufacturer cuts most of the expense. From a consumer perspective, an integrated video solution generally means less expandability and lower overall performance (since the video memory is drawn from the main system memory, reducing the amount of memory available to the rest of the system). However, there are sometimes good reasons to opt for integrated video:

- Low-end baseline machines: ideal for people who just need a basic computer to type documents, check email, etc.

- HTPCs (home theater PCs): watching movies doesn't require an exhaustive amount of graphical processing power, so a simple onboard video solution is sometimes sufficient

- Terminal/office machines: secretarial computers do not need massive processing power, and neither do public access machines (e.g., in a library)

Interfaces: PCI, AGP, PCIE

The performance of a videocard is limited by the interface it uses: some interfaces provide more bandwidth than others and, as such, make a better choice for videocards (especially gaming cards, which are heavily bandwidth dependent). A quick breakdown of interfaces, past and present:

- PCI. An abbreviation for peripheral component interconnect, this is a 32-bit interface (64-bit PCI slots exist, but they are generally used in server environments). Even though videocards have moved to faster and better interfaces, PCI videocards still serve a very useful purpose in troubleshooting.

The PCI interface operates at 33MHz (more precisely 33.33MHz) and thus offers 133MB/s of bandwidth (33.33MHz x 32bits ÷ 8bits/byte ≈ 133MB/s). There are numerous revisions and spin-offs of the PCI interface; however, such innovations are generally not videocard oriented and as such won't be covered here.

- AGP. An abbreviation for accelerated graphics port, this 32-bit interface was developed to better accommodate faster, more bandwidth-intensive videocards. AGP operates at 66MHz (more precisely 66.67MHz) and as such offers a baseline of 266MB/s of bandwidth (66.67MHz x 32bits ÷ 8bits/byte ≈ 266MB/s). There is also a 64-bit specification for workstation-class cards; however, that's outside the scope of this 101.

For all intents and purposes, AGP is a dedicated PCI connection just for videocards: the advantage of AGP is that it eliminates the latencies in accessing system memory and the processor. Whereas PCI devices need to negotiate their way to these resources over a shared bus, an AGP device has direct access to them. As a side note, people often refer to AGP as a type of bus: this is, in fact, technically incorrect, as a bus is supposed to serve multiple devices, whereas AGP only serves a single video device. This is, of course, a very trivial technicality and, in general conversation, overlooked.

Different AGP specifications provide varying amounts of bandwidth:

- AGP 1X. 266MB/s (66MHz x 32bits ÷ 8bits/byte)

- AGP 2X. 533MB/s (66MHz x 32bits ÷ 8bits/byte x 2, double-pumped)

- AGP 4X. 1066MB/s (66MHz x 32bits ÷ 8bits/byte x 4, quad-pumped)

- AGP 8X. 2133MB/s (66MHz x 32bits ÷ 8bits/byte x 8, octo-pumped)

Over the years, the AGP specification has been refined, and newer revisions have been introduced with increased performance at each stage:

- AGP 1.0. This specification supported 1X and 2X and used an AGP 3.3v physical connector

- AGP 2.0. This specification supported 1X, 2X and 4X and used an AGP 1.5v, AGP 3.3v or AGP Universal physical connector

- AGP Pro 1.x. The Pro moniker designates that these videocards are destined for high-end workstations, often employed in drafting/animation/studio environments. This specification supports 1X, 2X and 4X and uses an AGP Pro 3.3v, AGP Pro 1.5v or AGP Pro Universal physical connector

- AGP 3.0. This specification supports 1X, 2X, 4X and 8X and uses an AGP 1.5v physical connector

- AGP Pro 3.0. This specification is an expansion on AGP Pro 1.x and supports 1X, 2X, 4X and 8X; it uses an AGP Pro 1.5v physical connector

Something that may be confusing is the set of voltage values being thrown around, along with the various speed grades and the possibility of incompatibility. To clarify, there are two types of voltages used with AGP videocards: key and signalling.

- The key-voltages are associated with the physical connector (i.e., in the above list, "AGP 1.5v physical connector" indicates that the key-voltage is 1.5v, etc.). The only exception is "AGP Universal", which has no key-voltage.

- The signal voltage is the voltage that is associated with the speed ratings of the card:

- AGP8X uses 0.8v

- AGP4X uses 1.5v or 0.8v

- AGP2X and AGP1X use 3.3v or 1.5v

With this somewhat overwhelming set of voltages, differing types of voltage, keys, Pro vs non-Pro and various specifications, things can get a bit confusing. Fortunately, there is a fallback: all the devices are physically made such that you can only insert them into compatible slots (with reasonable force).

- PCIE. An abbreviation for PCI-Express (and sometimes referred to as PCIEx16, after the 16-lane slot used for graphics), formerly known as 3GIO. PCIE is a very high-speed serial interface which can be somewhat 'parallelized' by grouping 'lanes' of PCIE (a single lane being PCIEx1) together. Each lane provides 250MB/s in each direction, with video devices having access to either 8 lanes (2GB/s) or 16 lanes (4GB/s) (more on this later in VFAQ)
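All of the bandwidth figures in the list above come from the same arithmetic: clock x width ÷ 8 x transfers per clock for the parallel buses, and lane rate minus encoding overhead for PCIE. The Python sketch below reproduces them. The constant and function names are illustrative, and it assumes 1MB = 10^6 bytes, the exact nominal clocks noted in the comments, and truncation to whole MB/s so the results match the figures quoted in this guide.

```python
from fractions import Fraction

# Nominal clocks: "33MHz" PCI is really 33 1/3 MHz and "66MHz" AGP is 66 2/3 MHz,
# which is why the figures come out as 133 and 266 rather than 132 and 264.
PCI_CLOCK_MHZ = Fraction(100, 3)
AGP_CLOCK_MHZ = Fraction(200, 3)

# PCIE 1.x: 2.5 GT/s per lane per direction, with 8b/10b line coding
# (every 8 data bits are sent as 10 bits on the wire).
PCIE_LANE_MT_S = 2500
PCIE_DATA_BITS, PCIE_LINE_BITS = 8, 10


def parallel_bus_mb_s(clock_mhz: Fraction, width_bits: int, transfers_per_clock: int = 1) -> int:
    """Peak bandwidth of a parallel bus in MB/s, truncated to a whole number."""
    return int(clock_mhz * width_bits / 8 * transfers_per_clock)


def pcie_mb_s(lanes: int) -> int:
    """Peak PCIE 1.x bandwidth in MB/s for ONE direction of a link."""
    per_lane_mbit_s = PCIE_LANE_MT_S * PCIE_DATA_BITS // PCIE_LINE_BITS  # 2000 Mbit/s
    return lanes * per_lane_mbit_s // 8                                   # 250 MB/s per lane


if __name__ == "__main__":
    print(f"PCI:     {parallel_bus_mb_s(PCI_CLOCK_MHZ, 32)} MB/s")
    for mode in (1, 2, 4, 8):
        print(f"AGP {mode}X:  {parallel_bus_mb_s(AGP_CLOCK_MHZ, 32, mode)} MB/s")
    for lanes in (1, 8, 16):
        print(f"PCIE x{lanes}: {pcie_mb_s(lanes)} MB/s per direction")
```

Exact fractions are used for the AGP/PCI clocks so that rounding error cannot creep in before the final truncation. Note that the PCIE figures are per direction; the link can carry the same amount the other way at the same time.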

Shader Models: Pixel Shaders, Vertex Shaders

Firstly, to preemptively clear up a very common misconception: a shader is not a hardware 'thing'; rather, a shader is simply code. It is a specific type of code that affects the pixels or vertices of a 3d object. To reiterate: video cards, in the context of pixel/vertex shaders, do not "come with shaders".

As just mentioned, a shader is simply a block of code that allows a game developer to add geometric and/or lighting transformations to a 3d object before it is finally rendered and seen by the end user. Vertex shaders are available to augment the T&L (transform and lighting) features (and consequently, vertex shader programs run at roughly the same stage at which T&L effects are applied) as well as to perform geometric deformations. Pixel shaders run after all the geometry has been finalized and generally concern themselves with texture, lighting and other surface-related effects.
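To make the "a shader is just code" point concrete, here is a Python sketch of what the two kinds of shader programs do conceptually. Real shaders are written in a shading language and run on the GPU for every vertex or pixel; the function names, the vertical "squash" deformation and the Lambert (N·L) diffuse lighting below are illustrative choices, not taken from any particular game or API.

```python
# A Vec3 is a plain (x, y, z) tuple of floats.
Vec3 = tuple[float, float, float]


def vertex_shader(position: Vec3, squash: float) -> Vec3:
    """Runs once per vertex: a simple geometric deformation that squashes the
    object vertically by scaling its y coordinate."""
    x, y, z = position
    return (x, y * squash, z)


def pixel_shader(normal: Vec3, light_dir: Vec3, base_color: Vec3) -> Vec3:
    """Runs once per pixel: Lambert diffuse lighting, brightness = max(0, N . L).
    Both vectors are assumed to be unit length already."""
    n_dot_l = max(0.0, sum(n * l for n, l in zip(normal, light_dir)))
    return tuple(c * n_dot_l for c in base_color)


if __name__ == "__main__":
    triangle = [(0.0, 1.0, 0.0), (1.0, 2.0, 0.0), (-1.0, 2.0, 0.0)]
    # A GPU runs the same program over every vertex; a list comprehension stands in here.
    deformed = [vertex_shader(v, 0.5) for v in triangle]
    # After rasterization, the pixel program runs over every pixel in the same way.
    light = (0.0, 0.0, 1.0)
    pixel_normals = [(0.0, 0.0, 1.0), (0.0, 0.6, 0.8), (0.0, 0.0, -1.0)]
    colors = [pixel_shader(n, light, (1.0, 0.5, 0.25)) for n in pixel_normals]
    print(deformed)
    print(colors)
```

Neither function knows anything about the hardware it runs on, which is the point: the card supplies the machinery to run such programs, and the game supplies the programs themselves.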

Over the years, several revisions of the shader models have evolved:

- Shader Model 1.x. With DirectX7-class games/hardware, game developers got their "flexibility" by means of a series of toggle-able special effects. DirectX8.x changed things by allowing the game developer to do whatever they wanted between an input and an output point, so long as the hardware supported the said code (i.e., there were enough hardware registers present to actually execute the desired shader op).

- Shader Model 2.x. Introduced with DirectX9, the SM2.0 and later SM2.x feature set allows game developers to do pretty much everything that gamers have been used to seeing for the last few years. SM2.0 increased the minimum number of registers required (thus increasing the possible length of shader programs and, indirectly, their complexity). The last major change that SM2.0 brought was looping: no longer did game developers have to write long unrolled procedural programs (which were then limited by the low register counts), and they also gained some basic [static] branching. The later revisions (e.g., 2.0b) were, for all intents and purposes, effectively SM3.0 (but without the extended maximum shader length, which game developers haven't yet taken advantage of).

- Shader Model 3.0. The current shader model revision, SM3.0 is essentially a "flexibility extension" on SM2.0b. The major additions include dynamic branching, increased color precision requirements and texture lookups from within vertex shaders

- Shader Model 4.0. At this point it seems that SM4.0 is looking to merge the two shader blocks into a single coherent block (so that shader enhancements will apply to both pixel and vertex shaders), as well as making adjustments to integer vs FP memory addressing

Shader Pipelines

A shader pipeline is essentially a dedicated hardware path for a shader program to run on: having more such paths allows more programs to run simultaneously (and thus overall performance increases as the number of pipelines increases). For the most part, the effects seen in games affect the scene after all geometric data has been processed (i.e., after the vertex shaders have done their work), so there is generally more of a benefit to increasing the number of pixel pipelines as cards become more advanced (this also explains why there are almost always more pixel pipelines than vertex pipelines).
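A rough way to see why more pipelines help: if each pixel pipeline finishes one pixel per clock, the work divides evenly across them. That is an idealized assumption (real pipelines stall on texture fetches and longer shaders take several clocks per pixel), and the 400MHz core clock in the usage example is hypothetical.

```python
def shading_cycles(pixels: int, pixel_pipelines: int) -> int:
    """Clock cycles to shade `pixels` once, assuming every pipeline finishes
    one pixel per clock with no stalls (an idealized model)."""
    # Ceiling division: a partially filled last batch still costs a full cycle.
    return (pixels + pixel_pipelines - 1) // pixel_pipelines


def shading_time_ms(pixels: int, pixel_pipelines: int, core_clock_mhz: int) -> float:
    """Convert the cycle count to milliseconds at a given core clock."""
    return shading_cycles(pixels, pixel_pipelines) / (core_clock_mhz * 1000)


if __name__ == "__main__":
    frame = 1024 * 768  # one full-screen pass at 1024x768
    for pipes in (8, 16):
        print(pipes, "pipelines:", shading_cycles(frame, pipes), "cycles,",
              round(shading_time_ms(frame, pipes, 400), 3), "ms at a hypothetical 400MHz")
```

Under this model, doubling the pixel pipelines halves the time per full-screen pass, which is why pixel pipeline counts have grown faster than vertex pipeline counts.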

SLI/Crossfire and other Multigpu configurations

3dfx: where it all started

SLI, originally introduced by 3dfx with their Voodoo2 cards, stood for scan line interleave, and it was just that: two video processors would work together to render a frame, one processing the odd lines and the other the even ones. 3dfx implemented SLI both with two cards connected by a dongle (which suffered from timing issues, since the scene reconstruction occurred after the video had been sent to the RAMDACs) and with two GPUs on the same board. For the most part, when 3dfx disappeared, SLI disappeared with it.

nVidia

More recently, nVidia has resurrected the idea of multi-GPU rendering (they weren't the only ones to do so, just the only ones really successful at it). As things currently stand, SLI stands for scalable link interface. The principle here is the same: take two identical, compatible video cards (that is, same make and model), link them up and have the two cards jointly render a scene. There is a theoretical performance increase of 100%; however, it will never hit that mark due to load-balancing overhead.

A few intrepid manufacturers like Gigabyte and ASUS have gone a step further and put two GPUs and two sets of memory together on a single card (i.e., single-card SLI). Also, with the latest driver revisions (ok, for some time now), SLI no longer requires that the cards be exactly identical: in fact, the individual cards can run asynchronously from each other (also, the PEG link is no longer strictly required; however, not using it comes at a roughly 5% performance cost).

ATi's competing technology, Crossfire, is essentially the same principle; however, ATi's Crossfire is significantly more 'open'. Whereas nVidia's SLI requires an NF4SLI chipset to be paired with two identical cards for SLI to be enabled, ATi's solution works across multiple chipsets (RX200 and i955X), and two identical cards are not required. In short, Crossfire is slightly more flexible and lenient as far as product selection goes, but at the end of the day, as far as the consumer is concerned, it's essentially the same deal. As noted above, SLI has become more flexible as of late; however, it's interesting to note that Crossfire was developed with flexibility in mind from the start.

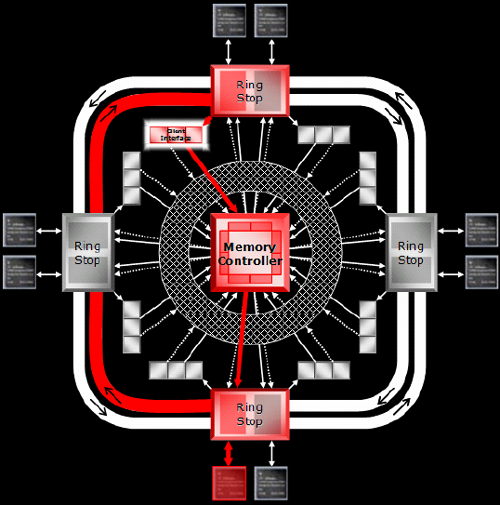

RingBus

This is ATi's fancy new 512-bit memory controller architecture, designed with very high clock speeds and future-proofing in mind. The quick and dirty explanation of how it works is that there are two 256-bit memory paths that data can traverse, with four stop-points (at each of those points, the controller can access two memory modules). This means that traversing between memory, the memory controller and the cache is done extremely efficiently, which may be the explanation behind why the Radeon X1800 cards can compete so well against their GeForce 7800 counterparts even though they suffer a pipeline deficit. Again, a picture can quickly convey the idea of how things operate.
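One way to see why two paths help is to count hops between stops. The Python sketch below assumes the two 256-bit paths run in opposite directions around the ring, that the four stops are numbered 0 to 3, and that every hop costs the same. It is a simplified model for illustration, not ATi's actual arbitration logic, and the function names are hypothetical.

```python
def ring_hops(src: int, dst: int, stops: int = 4) -> tuple[int, int]:
    """Hops from stop `src` to stop `dst`, going one way and the other way."""
    forward = (dst - src) % stops
    backward = (src - dst) % stops
    return forward, backward


def best_hops(src: int, dst: int, stops: int = 4) -> int:
    """With a path in each direction, data can take whichever route is shorter."""
    return min(ring_hops(src, dst, stops))


if __name__ == "__main__":
    worst_one_way = max(ring_hops(s, d)[0] for s in range(4) for d in range(4))
    worst_two_way = max(best_hops(s, d) for s in range(4) for d in range(4))
    print("worst case, one direction only:", worst_one_way, "hops")
    print("worst case, both directions:  ", worst_two_way, "hops")
```

With only one direction, the worst-case trip around a four-stop ring is 3 hops; with both directions available it drops to 2, and the average distance drops as well.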

HyperMemory & TurboCache

The easiest way to cut costs on budget cards is to use less 'stuff' (i.e., fewer pipelines, a narrower memory bus, less memory etc). This works, however, only up to a point, after which the cost savings no longer outweigh the performance hits. One method of further reducing costs without dropping performance significantly is to leech off the system memory (and thus reduce the amount of memory physically included on the card).

For an AGP card this would result in a massive performance hit, since AGP's bandwidth is optimized for one-way transfers; PCI Express, however, is a full-bandwidth bi-directional interface, so this [leeching the system memory] is now possible with only minor architecture changes. To the end user, the performance of a card with HyperMemory/TurboCache is comparable to (although lower than) that of the same card with the same amount of actual physical memory. Generally speaking, it's advisable to avoid purchasing such cards, since comparable cards (which don't leech off the system memory) are available in the same price bracket.

Video Memory: [G]DDR, [G]DDR2, [G]DDR3?

To clarify a few things about naming conventions within the context of video memory:

- DDR = DDR1 = GDDR = GDDR1

- DDR2 = DDR-II = GDDR2 = GDDR-II

- DDR3 = GDDR3

There seems to be something of a consensus to use DDR, DDR-II and GDDR3 to denote the different types of memory, and as such we'll use them here. As a side note, the reason they tack on the 'G' is to denote that we are talking about graphics memory rather than normal system memory (video and system memory are quite different, and the extra letter helps avoid confusion).

In principle, all three types work the same way: they are all "DDR", meaning that they all transfer data at a double data rate (for each clock pulse, data is sent on both the rising and falling edges of the pulse, as opposed to older types of memory, which only sent data once per pulse). The difference is that DDR-II and GDDR3 are capable of higher clock speeds, with GDDR3 using a lower signalling voltage and thus not suffering the heat issues encountered with DDR-II. Of the three, as expected, GDDR3 is the most advanced, and for those concerned with overclocking and squeezing the most performance from the card, it will be the memory type of choice.
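The practical effect of "double data rate" and of higher clocks shows up in peak bandwidth. This Python sketch computes it as clock x transfers per clock x bus width ÷ 8; the 600MHz clock and 256-bit bus in the example are hypothetical round numbers, not the specification of any particular card, and 1MB is taken as 10^6 bytes.

```python
def peak_bandwidth_mb_s(clock_mhz: int, bus_width_bits: int, transfers_per_clock: int = 2) -> int:
    """Peak memory bandwidth in MB/s.

    transfers_per_clock=2 models double data rate (rising + falling edge);
    use 1 for older single-data-rate memory.
    """
    return clock_mhz * transfers_per_clock * bus_width_bits // 8


if __name__ == "__main__":
    # Hypothetical card: 600MHz memory on a 256-bit bus.
    print("DDR-type:", peak_bandwidth_mb_s(600, 256), "MB/s")
    print("single data rate:", peak_bandwidth_mb_s(600, 256, transfers_per_clock=1), "MB/s")
    print("same memory on a 128-bit bus:", peak_bandwidth_mb_s(600, 128), "MB/s")
```

Since DDR, DDR-II and GDDR3 all move two transfers per clock, the only terms that differ between them here are the clock speed each can reach and the bus width the card designer pairs with it.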

Refresh Rate/Response Time

These are measures of the performance of a display device: the refresh rate measures the number of times a CRT refreshes per second, with higher values being superior. Response time is a measure of performance for LCD displays. Although not technically accurate, you can 'translate' a response time into a refresh rate using the following conversion:

Approximate Refresh Rate Equivalent (Hz) = 1000 ÷ Response Time (ms)
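The conversion is just "one over the response time", with the 1000 turning milliseconds into seconds. As a small Python sketch (the function name is illustrative, and the result is the same rough rule of thumb as the formula, not a display specification):

```python
def approx_refresh_hz(response_time_ms: float) -> float:
    """Rough refresh-rate equivalent of an LCD response time (rule of thumb only)."""
    if response_time_ms <= 0:
        raise ValueError("response time must be positive")
    return 1000 / response_time_ms


if __name__ == "__main__":
    for ms in (25, 16, 8):
        print(f"{ms}ms response time ~ {approx_refresh_hz(ms)}Hz")
```

So a 16ms panel behaves roughly like a 62.5Hz display, and halving the response time to 8ms doubles the equivalent figure to 125Hz.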

DSub15, DVI, RCA, Coax, SVideo

DSub15 and DVI are the two common connectors found on videocards which allow users to connect CRTs and LCDs to them. High-end video cards often feature one or more DVI connectors; users with CRTs that don't interface with DVI will need to use a converter.

For videocards featuring video-in and video-out connectors, RCA or S-Video may be used. Both RCA and S-Video are established media connectivity formats, with S-Video being the superior of the two.

Vsync

An option present in many games, vsync or vertical synchronization means that the frames being drawn on the screen will coincide with the actual refresh of the display device. Disabling vsync generally allows for higher framerates; however, since frames are being generated regardless of whether the display is ready to show them, artifacts sometimes appear (most commonly 'tearing', where parts of two different frames are visible at once). Enabling vsync will limit these artifacts and flickering.

VIVO

An abbreviation for Video-In Video-Out, this simply means that the card supports both input and output to/from external video sources. Most videocards will feature some form of VideoOut, which allows you to output what you see to a TV or a VCR etc. VideoIn is the exact reverse: it allows you to capture video coming in from a TV, VCR or other similar device. VideoOut is often a standard feature on videocards and as such won't add much to the cost of the device; VideoIn, however, will add significantly to the cost, so if you're on a budget, make sure you want these features before selecting a card with VIVO.

RAMDAC

An abbreviation for RAM Digital-to-Analog Converter, these devices convert the internal digital signal to an analog form that analog monitors can interpret (digital displays do not need this extra processing). The performance of a RAMDAC is given in MHz, with higher ratings allowing for higher resolution + refresh rate combinations. For the most part, avoid buying cards with RAMDACs rated at less than 350MHz.
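The link between a RAMDAC's MHz rating and resolution/refresh rate is the pixel clock: the number of pixels per second the mode requires, including the blanking intervals around the visible picture. The Python sketch below computes it from the total (visible + blanking) horizontal and vertical counts. The 2160 x 1250 totals used for a 1600x1200 picture are the standard VESA timing figures for that resolution and are included only as an example; a specific monitor's timing data may differ.

```python
def pixel_clock_mhz(h_total: int, v_total: int, refresh_hz: float) -> float:
    """Pixel clock a display mode needs, in MHz.

    h_total / v_total include the blanking intervals, so they are larger
    than the visible resolution.
    """
    return h_total * v_total * refresh_hz / 1e6


if __name__ == "__main__":
    # 1600x1200 visible, 2160x1250 total (example VESA timing).
    for hz in (60, 85):
        print(f"1600x1200 @ {hz}Hz needs about {pixel_clock_mhz(2160, 1250, hz)}MHz")
```

A RAMDAC has to be rated at or above the pixel clock of the mode you want to run, so a higher rating leaves room for higher resolutions and refresh rates.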